Table of Contents

Key Takeaways

- Why Run LLMs Locally? The Case for a Local AI Setup.

- System Requirements and Choosing the Right Model.

- How to Install Ollama and Run Your First Local LLM.

Cloud AI costs add up fast. OpenAI’s API charges per token, and subscription fees drain your budget every month. But what if you could run powerful AI models on your own hardware — offline, for free, with zero data sent to any external server?

That’s exactly what Ollama makes possible. This open-source tool lets you run LLMs locally with Ollama on Windows, macOS, or Linux in under five minutes. No API key required. No monthly bill. No privacy compromises.

In this guide, you’ll learn how to install Ollama, pick the right model for your hardware, and start chatting with a local LLM today. Whether you’re a developer building a private coding assistant or a curious user exploring AI without paying per query, this step-by-step tutorial covers everything. By the end, you’ll have a fully functional local AI running on your machine.

Why Run LLMs Locally? The Case for a Local AI Setup

Cloud AI tools are convenient, but they come with real trade-offs. Every prompt you send to a cloud provider is processed on a remote server. Your queries may be used for model training, reviewed by human annotators, or stored for months. For developers, businesses, and privacy-conscious users, that’s a significant risk.

A local AI setup removes all of that exposure. Your data never leaves your device. This matters most for developers working with proprietary code, healthcare workers handling patient notes, legal professionals with confidential documents, or anyone operating under strict data regulations like GDPR.

The cost argument is equally compelling. Cloud LLM APIs charge per token — costs that spiral quickly with heavy use. Ollama, once installed, is completely free under an MIT license. You pay only for the electricity your machine uses.

Here’s why thousands of developers choose a local AI setup over cloud alternatives:

- Complete data privacy — your prompts and responses never leave your device

- Zero ongoing cost — no API fees after initial setup

- Offline access — works without an internet connection once models are downloaded

- No rate limits — run as many queries as your hardware supports

- Full customization — adjust model parameters and system prompts freely

- OpenAI-compatible API — swap cloud providers for local models in existing apps

According to data from local AI communities, Ollama installation search volume exceeded 10,000 searches per month in early 2026, reflecting rapid growth in developer adoption. The shift toward local AI isn’t a niche trend — it’s becoming standard practice. Browse our how-to guides for more practical technology tutorials like this one.

System Requirements and Choosing the Right Model

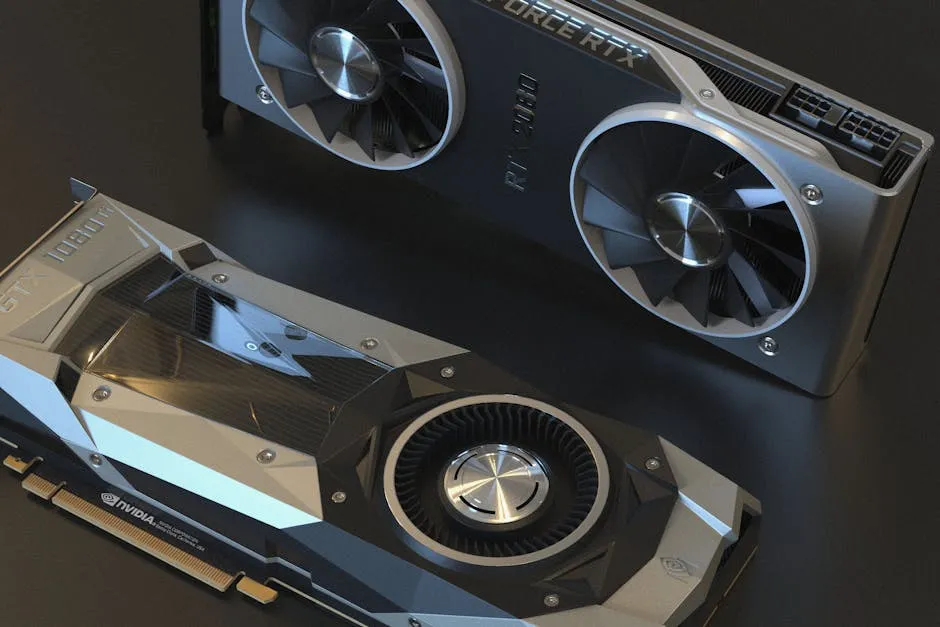

Before installing Ollama, check that your hardware matches your chosen model. The key metric is available RAM — or VRAM if you have a dedicated GPU.

A useful rule of thumb: budget approximately 0.6 GB per billion parameters at q4_K_M quantization. A 7B model needs 4–6 GB, a 13B model needs 8–10 GB, and a 70B model requires 38–48 GB. For most users on standard consumer hardware, the 7B and 8B class models strike the best balance of quality and performance.

Here’s a comparison of the top beginner-friendly models available through Ollama:

| Model | Disk Size | RAM Required | Best For |

|---|---|---|---|

| Llama 3.3 8B | ~4.9 GB | 8 GB | General chat, writing |

| Mistral 7B | ~4.1 GB | 6–7 GB | Fast responses |

| Qwen 3 7B | ~4.3 GB | 8 GB | Coding, multilingual tasks |

| Phi-4-mini (3.8B) | ~2.5 GB | 4 GB | Low-resource devices |

If you have an NVIDIA GPU with 8 GB+ VRAM, Ollama automatically offloads processing to the GPU. Expect 40+ tokens per second on mid-range cards — far faster than CPU-only inference. Apple Silicon chips (M1 through M4) also deliver excellent performance through native Metal GPU acceleration with no extra configuration required.

For this tutorial, we’ll use Llama 3.3 8B as the example — the recommended starting point for most users with 8 GB RAM or more.

How to Install Ollama and Run Your First Local LLM

Follow these numbered steps to get Ollama running on your machine. The entire process takes under five minutes on a typical broadband connection.

Step 1: Download and install Ollama

Visit ollama.com/download and grab the installer for your OS. On macOS and Windows, run the installer and accept the defaults. On Linux, paste this command into your terminal:

curl -fsSL https://ollama.com/install.sh | shStep 2: Verify the installation

Open a new terminal window and confirm Ollama installed correctly:

ollama --versionYou should see a version number like ollama version 0.6.x. If the command isn’t found, restart your terminal or reboot your machine.

Step 3: Pull your first model

Download Llama 3.3 8B from Ollama’s library. This requires an internet connection and downloads approximately 4.9 GB:

ollama pull llama3.3Ollama pulls pre-quantized models from its library at ollama.com/library, which hosts over 100 models. You only need to download a model once — it’s cached locally for all future use, fully offline.

Step 4: Run the model

Start an interactive chat session directly in your terminal:

ollama run llama3.3Type any message and press Enter. The model responds entirely on your local hardware. No internet required after this point. Press Ctrl+D or type /bye to exit the session.

Step 5: Manage your installed models

List all models downloaded on your system:

ollama listTo remove a model and reclaim disk space:

ollama rm llama3.3Using Ollama’s REST API

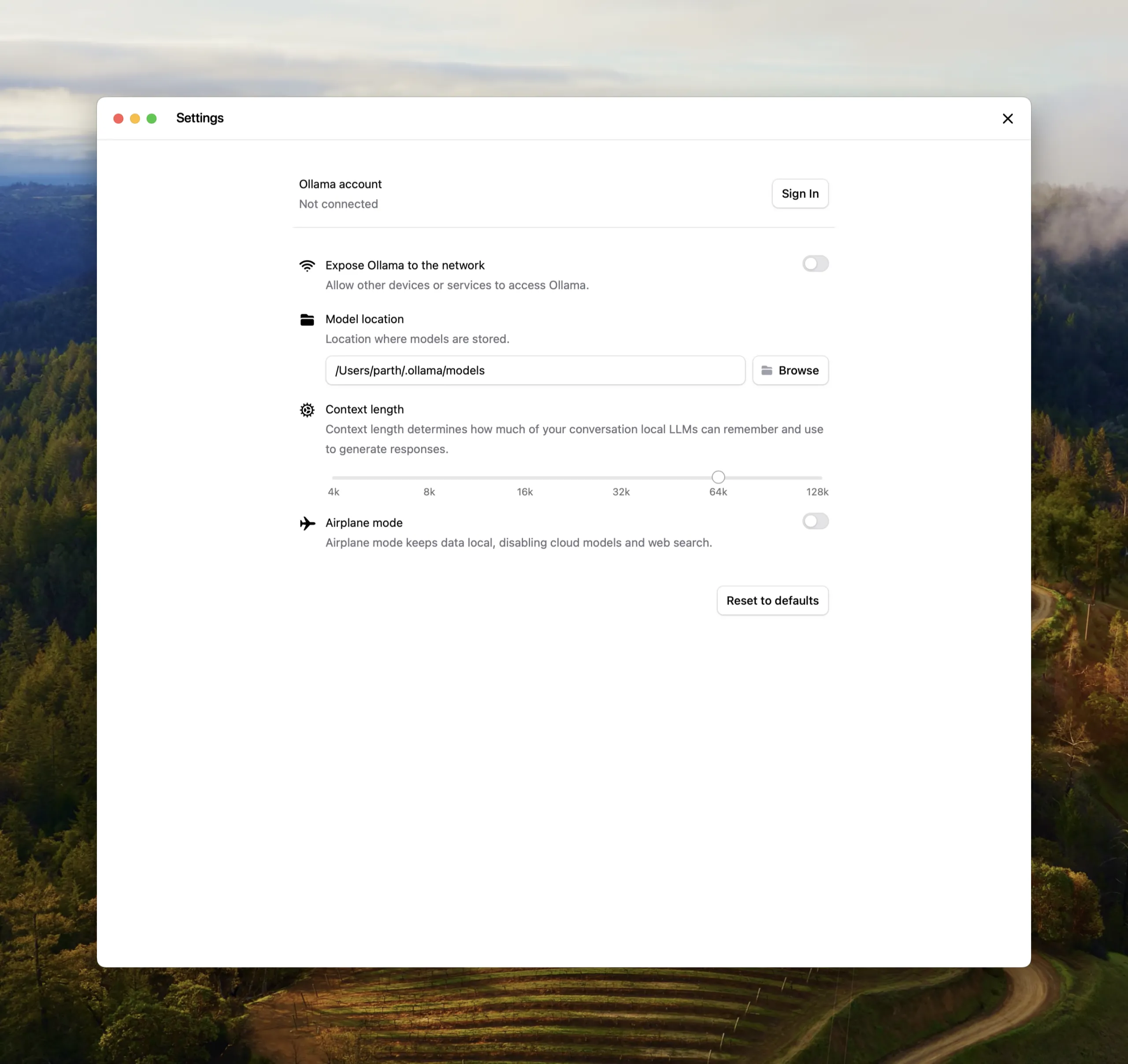

Ollama exposes a local REST API at http://localhost:11434 that is compatible with the OpenAI API format. This means you can point tools like Open WebUI, the Continue VS Code extension, or your own Python scripts at Ollama — just as you would a cloud provider, but with complete local control. Review the full Ollama API documentation on GitHub for advanced integration options.

Common Questions — Run LLMs Locally With Ollama

Q: Do I need a GPU to run LLMs locally with Ollama?

A: No, Ollama runs on CPU-only systems. Inference will be slower — a modern CPU with 16 GB RAM typically generates 5–15 tokens per second with a 7B model, which is usable for most tasks. An NVIDIA GPU with 8 GB+ VRAM accelerates this to 40+ tokens per second. Apple Silicon also delivers excellent performance through Metal GPU acceleration built into macOS.

Q: Is Ollama safe and private to use?

A: Yes. Once a model is downloaded, all inference runs entirely on your local hardware. No prompts, responses, or conversation data are transmitted to any external server. This makes Ollama one of the safest AI tools available for sensitive use cases involving personal, medical, legal, or proprietary information.

Q: Which LLM should a beginner start with on Ollama?

A: Start with Llama 3.3 8B if you have 8 GB RAM — it delivers the best all-around balance of capability and speed for general use. For machines with only 4 GB RAM, try Phi-4-mini (3.8B), which achieves strong results on math and reasoning despite its small size. For multilingual tasks or coding, Qwen 3 7B is the stronger choice.

Q: Can I use Ollama with third-party apps and UIs?

A: Absolutely. Ollama’s local API at http://localhost:11434 is OpenAI-compatible, which means hundreds of existing tools work with it out of the box. Popular options include Open WebUI (a full browser-based chat interface), the Continue extension for VS Code and JetBrains, and any Python script using the ollama Python library available on PyPI.

Conclusion

Running LLMs locally is no longer reserved for researchers or users with expensive hardware. With Ollama, any modern laptop or desktop can host a capable AI model — privately, offline, and completely free.

Three key takeaways: a 7B model runs comfortably on 8 GB RAM, the entire setup takes under five minutes from download to first chat, and the privacy and cost advantages over cloud AI are immediate. You own your data from the first prompt.

Ready to go deeper? Explore our AI coverage for the latest open-source model releases, benchmarks, and comparisons — updated regularly as the local AI ecosystem evolves.

Last Updated: April 15, 2026