Table of Contents

Key Takeaways

- Microsoft’s new MCP security push matters because Model Context Protocol makes tool calls easy, but it does not decide whether a tool call should be allowed before it runs.

- The biggest immediate risks are not abstract AI fear stories: they include tool poisoning, over-permissioned actions, and patchable SDK issues such as the MCP Inspector RCE and Python SDK DNS rebinding flaw cited in the ecosystem.

- If your team is experimenting with Cursor, Copilot, Claude, or custom agents on top of MCP, the right move now is a governance checklist: limit tool scope, patch MCP tooling, log every call, and deny dangerous actions by default.

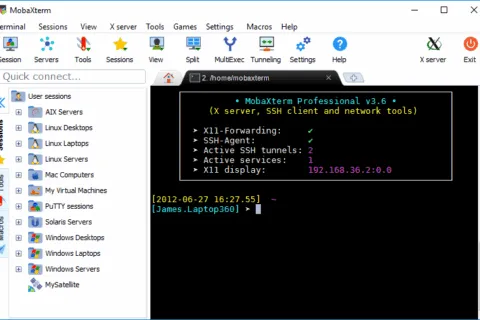

MCP security checklist is the real story behind Microsoft’s April 22 announcement, not just the launch of another developer toolkit. In its official post, Microsoft argued that MCP needs a policy checkpoint before execution because tool definitions go straight to the model and tool servers can be hosted by anyone. That gap matters more as MCP adoption spreads across agent workflows, a trend Hubkub already covered in our MCP growth explainer.

Hubkub’s winnable angle is not to rewrite Microsoft’s blog. It is to answer the practical search question developers and technical leads will ask next: what should we change right now if our agents can call tools, APIs, file systems, or third-party services? The short answer is to treat MCP like an execution layer that needs policy, identity, audit logs, and a deny-by-default stance before you let it touch production data.

What changed in Microsoft’s MCP security push?

Microsoft’s message is straightforward: MCP made tool discovery easy, but it left a governance gap between “the model chose a tool” and “the call was actually allowed.” To address that gap, Microsoft introduced the open-source Agent Governance Toolkit in public preview. The project sits between an agent framework and the actions that agent takes, then evaluates each tool call against deterministic policy before execution.

That distinction matters. Prompt instructions can tell an agent to behave, but Microsoft’s own red-team benchmark reported a 26.67% policy violation rate when safety relied on prompt-only instructions. The toolkit’s pitch is that policy should be enforced at the application layer instead of trusting the model to self-police. For teams already reading Hubkub’s AI agent security deep dive, this is the missing operations layer: a control plane, not another prompt trick.

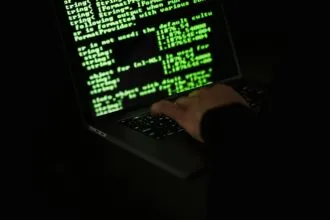

Why is MCP risky without a control plane?

MCP is attractive because it gives agents a consistent way to call tools. The same convenience also expands the blast radius when governance is weak. Microsoft links that risk to the OWASP MCP Top 10 and cites two concrete NVD-tracked examples: CVE-2025-49596, a reported unauthenticated RCE in MCP Inspector with a CVSS 9.4 score, and CVE-2025-66416, a DNS rebinding issue in the Python SDK scored 7.6.

Those CVEs are implementation bugs, not proof that MCP is unusable. But they show why technical teams should stop treating agent tool execution as a toy layer. If an agent can read files, hit production APIs, create tickets, or touch cloud infrastructure, weak governance turns a helpful assistant into an unreviewed automation path. That risk also affects teams using agent-first environments such as Cursor 3.0 or hybrid coding workflows described in our Cursor guide.

| Risk area | What can go wrong | What to do now |

|---|---|---|

| Tool poisoning | Malicious tool descriptions push the model toward unsafe actions or data exposure. | Allow only approved tool servers and review tool descriptions before rollout. |

| Over-permissioned tools | An agent can call file, shell, or admin tools with more scope than the task needs. | Use least-privilege policies and deny dangerous actions by default. |

| Patch lag | Known SDK or inspector flaws stay live in labs that later get connected to real systems. | Patch MCP Inspector and SDK dependencies before expanding usage. |

| No audit trail | Teams cannot prove which agent called which tool with which arguments. | Log every tool call and retain execution evidence for review. |

What should teams do now if they use MCP?

The safest near-term response is a short operational checklist, not a platform migration panic. Start with these steps:

- Inventory every MCP-connected tool your team is already testing, including internal prototypes.

- Patch known MCP tooling issues before exposing those tools to sensitive systems or shared environments.

- Create allow/deny policies for tool names, argument patterns, identities, and environments.

- Separate dev and prod tool servers so experiments cannot quietly inherit production access.

- Log and review tool calls the same way you would review CI/CD automation or privileged API activity.

- Block high-risk actions by default such as shell execution, destructive writes, secret access, and unrestricted external calls.

If you are still early, keep the rule simple: an MCP server should not get production-grade trust just because it works in a demo. Microsoft’s toolkit is useful because it reframes the question from “does the model understand the rules?” to “does the runtime enforce the rules?” That is a far better fit for teams that already think in terms of IAM, auditability, and controlled deployment stages.

How is Agent Governance Toolkit different from prompt guardrails?

The practical difference is determinism. Prompt guardrails ask the model to behave. Governance layers decide whether the action is permitted before the action runs. In the Agent Governance Toolkit README, Microsoft describes the project as runtime governance for AI agents with deterministic policy enforcement, zero-trust identity, execution sandboxing, and auditability across multiple frameworks including OpenAI Agents, LangChain, CrewAI, Azure AI, and more.

That makes AGT more relevant to technical leads than to casual chatbot users. If your workflow never lets an AI system touch tools, files, or production APIs, this story is less urgent. But if you are moving toward coding agents, internal copilots, or tool-using automation, governance becomes part of the architecture. That is why this topic fits Hubkub’s coding-agent cluster better than a generic launch recap.

Who should act first and what can wait?

Act first if your agents can touch code repositories, local shells, ticketing systems, documents, customer data, or cloud resources. Those teams need policy, patching, and audit logs now. Act next if you are still in experimentation but plan to connect more tools soon. You can wait if you are only reading vendor demos and have not exposed tool execution to real systems yet, but even then this is the right time to design your policy model before habits harden.

Common Questions — MCP security and agent tool execution

Q: What is the biggest MCP security mistake right now?

A: The biggest mistake is treating tool execution as a prompt problem instead of an enforcement problem. If an agent can call tools, your team needs explicit policy checks, scoped permissions, and logs before those calls run.

Q: Does Microsoft’s announcement mean MCP is insecure by design?

A: No. Microsoft’s point is that MCP standardizes how tools are discovered and called, but it does not define governance by itself. Teams still need runtime policy, patching, and operational controls around that protocol.

Q: Should small teams care about MCP governance too?

A: Yes, especially if a small team moves fast and lets AI tools touch repos, terminals, or SaaS APIs. Smaller teams usually have fewer review layers, so least-privilege defaults and audit logs matter even more.

Q: What should I read next if I am building coding-agent workflows?

A: Start with Hubkub’s MCP explainer for ecosystem context, then review our AI agent security guide, and finally compare practical coding-agent tools such as Cursor before you expand tool access.

The bottom line: Microsoft did not just publish another agent-framework update. It highlighted a real execution gap in the MCP era. If your agents can use tools, the safe move is not to trust prompts harder. It is to patch the MCP stack, narrow permissions, and add a policy checkpoint before the model gets to act. For the broader workflow context, continue with our MCP protocol explainer and our guide to AI agent security risks.