Key takeaways

- This article summarizes the practical impact of a Tufts University study in which a neuro-symbolic AI system cut energy use by up to 100x, for readers tracking AI and technology changes.

- Focus on confirmed details first, then treat predictions or market impact as analysis rather than settled fact.

Data centers worldwide consumed about 415 terawatt hours of electricity in 2024, more than the annual electricity use of many countries. That figure is on track to more than double by 2030. Now, researchers at Tufts University have demonstrated a fundamentally different approach that, in a robotics benchmark, cut training energy use by a factor of up to 100 while also improving accuracy. The technique is called neuro-symbolic AI, and it merges two long-separated branches of AI research into a single, efficient system. This article breaks down what Tufts found, how the technology works, and what it means for anyone building or deploying AI today.

What Is Neuro-Symbolic AI and Why AI Energy Efficiency Matters

Modern AI systems like large language models rely almost entirely on neural networks — systems trained on vast datasets to recognize patterns. They are powerful, but they consume enormous amounts of electricity. Training a single frontier model can use as much energy as dozens of homes consume in a year.

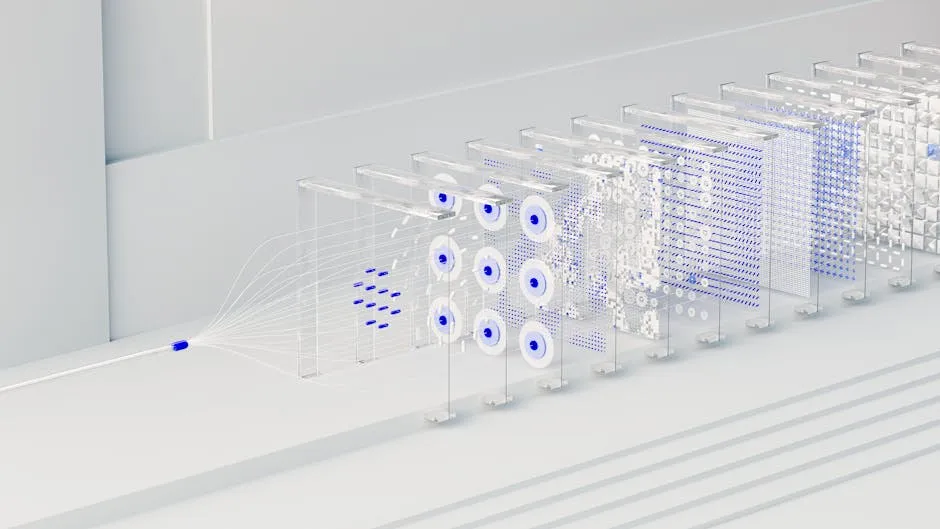

Neuro-symbolic AI takes a different route. It combines neural networks with symbolic reasoning — the rule-based, logic-driven approach that defined early AI research. Symbolic systems work like structured human reasoning: they break problems into categories, follow explicit rules, and plan step by step, rather than approximating every decision through billions of parameters.

How the Hybrid Architecture Works

The neural component handles perception — it looks at a scene, identifies objects, and estimates positions. The symbolic component then applies logical rules to plan a sequence of actions. Think of the difference between a chess player memorizing thousands of positions versus one who understands underlying principles. The principled player needs far less memorization and makes stronger decisions on positions they have never seen. For more on how hybrid AI approaches are reshaping the field, see the latest AI news on Hubkub.
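To make the split between perception and planning concrete, here is a minimal Python sketch of the idea. It is not the Tufts implementation: `perceive` is a hypothetical stand-in for a neural vision model, and the planner is a plain breadth-first search chosen for brevity. The point is only that once the scene is expressed as symbols, two explicit rules are enough to plan, with no training involved.

```python
from collections import deque

# Hypothetical stand-in for the neural perception stage. In a real system a
# vision model would produce these (disk_size, peg_index) detections.
def perceive(detections):
    """Convert detections into a symbolic state.

    The state is a tuple of three pegs; each peg is a tuple of disk sizes
    listed from the bottom disk to the top disk.
    """
    pegs = [[], [], []]
    for size, peg in sorted(detections, reverse=True):
        pegs[peg].append(size)
    return tuple(tuple(p) for p in pegs)

def legal_moves(state):
    """Yield (src, dst, next_state) for every move the puzzle rules allow."""
    for src, src_peg in enumerate(state):
        if not src_peg:
            continue
        disk = src_peg[-1]  # rule 1: only the top disk of a peg may move
        for dst, dst_peg in enumerate(state):
            if dst == src:
                continue
            if dst_peg and dst_peg[-1] < disk:
                continue  # rule 2: never place a larger disk on a smaller one
            pegs = [list(p) for p in state]
            pegs[src].pop()
            pegs[dst].append(disk)
            yield src, dst, tuple(tuple(p) for p in pegs)

def plan(start, goal):
    """Breadth-first search over symbolic states; returns a shortest move list."""
    queue = deque([start])
    parent = {start: None}
    while queue:
        state = queue.popleft()
        if state == goal:
            moves = []
            while parent[state] is not None:
                state, move = parent[state]
                moves.append(move)
            return moves[::-1]
        for src, dst, nxt in legal_moves(state):
            if nxt not in parent:
                parent[nxt] = (state, (src, dst))
                queue.append(nxt)
    return None  # goal not reachable from start

# Three disks stacked on peg 0; the goal is the same stack on peg 2.
start = perceive([(1, 0), (2, 0), (3, 0)])
goal = ((), (), (3, 2, 1))
moves = plan(start, goal)
print(f"{len(moves)} moves, first move: peg {moves[0][0]} -> peg {moves[0][1]}")
```

Because the rules live in `legal_moves` rather than in learned weights, the same planner handles a four-disk puzzle or a different starting layout without any retraining, which is the kind of generalization the chess analogy describes.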

The Tufts Breakthrough: Key Numbers from the Study

The research comes from the Human-Robot Interaction Laboratory at Tufts University, led by Matthias Scheutz, Karol Family Applied Technology Professor. His team compared their neuro-symbolic system against a standard vision-language-action (VLA) model, the type of AI powering many next-generation robots.

The benchmark was the Tower of Hanoi puzzle, a classic test of structured, sequential reasoning. The results were stark:

- Success rate (standard puzzle): Neuro-symbolic VLA 95% vs. standard VLA 34%

- Success rate (novel, unseen variant): Neuro-symbolic VLA 78% vs. standard VLA 0%

- Training time: Neuro-symbolic VLA 34 minutes vs. standard VLA over 36 hours

- Training energy use: Neuro-symbolic VLA used only 1% of what the standard VLA required

- Operational energy use: Neuro-symbolic VLA used only 5% of what the standard VLA consumed during task execution

The neuro-symbolic model did not just win on efficiency — it won on every metric. A system that has never seen a particular puzzle configuration still solved it 78% of the time. The standard model failed every single attempt on the same novel variant. The research is set for presentation at the International Conference on Robotics and Automation (ICRA) in Vienna in June 2026.
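The headline "100x" figure is easier to interpret once the reported percentages are converted into factors. The short Python check below uses only the numbers listed above; the variable names are illustrative, and because the standard VLA's training time is given as "over 36 hours", the speedup it computes is a lower bound.

```python
# Figures as reported in this article's summary of the Tufts study.
train_energy_fraction = 0.01   # neuro-symbolic training energy / standard VLA
run_energy_fraction = 0.05     # neuro-symbolic operating energy / standard VLA
train_minutes_ns = 34          # neuro-symbolic training time
train_minutes_vla = 36 * 60    # standard VLA: "over 36 hours" (lower bound)

train_energy_factor = 1 / train_energy_fraction
run_energy_factor = 1 / run_energy_fraction
train_speedup = train_minutes_vla / train_minutes_ns

print(f"Training energy reduction:  {train_energy_factor:.0f}x")
print(f"Operating energy reduction: {run_energy_factor:.0f}x")
print(f"Training speedup:           at least {train_speedup:.1f}x")
```

In other words, the 100-fold saving applies to training energy, while the saving during task execution is about 20-fold and the training speedup is a little over 60-fold.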

What This Means for AI’s Growing Energy Crisis

The IEA’s Energy and AI report projects global data center electricity consumption will reach roughly 945 terawatt hours by 2030, nearly 3% of all global electricity use. Electricity consumption by accelerated servers, which is driven mainly by AI, is projected to grow about 30% annually. These figures make architectural efficiency one of the most consequential engineering decisions of this decade.
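As a rough sanity check on those projections, the 2024 and 2030 figures cited in this article imply a compound annual growth rate that can be worked out directly. The sketch below assumes smooth compound growth between the two endpoints, which is a simplification, and the variable names are illustrative.

```python
# Data center electricity figures cited in this article (terawatt hours).
twh_2024 = 415
twh_2030 = 945
years = 2030 - 2024

growth_multiple = twh_2030 / twh_2024
# Compound annual growth rate, assuming steady year-over-year growth.
cagr = growth_multiple ** (1 / years) - 1

print(f"2030 demand is {growth_multiple:.2f}x the 2024 level")
print(f"Implied annual growth: {cagr:.1%}")
```

The result is a little under 15% per year for data centers overall, about half the roughly 30% annual growth cited for accelerated servers, which shows how much of the increase comes from AI hardware specifically.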

Neuro-symbolic approaches will not replace large language models overnight. The Tufts study was conducted in simulation and focused on structured robotic manipulation, not the full range of workloads driving data center expansion. But it makes a critical point: for constrained, well-defined tasks such as robotics, logistics, and quality control, the choice of architecture can change energy costs by one to two orders of magnitude.

For Southeast Asian economies experiencing rapid AI adoption, from Thailand’s manufacturing sector to Singapore’s financial services industry, the energy implications are particularly significant. Regional power grids face growing pressure as AI workloads scale. A 20x reduction in energy per robotic task, on top of a 100x reduction in training energy, is not a marginal improvement; it can be the difference between AI deployment being economically viable or prohibitively expensive at scale.

Here is what organizations deploying AI should take from this research:

- Match the architecture to the task. Not every AI problem needs a 70-billion-parameter model. Neuro-symbolic systems excel where tasks have clear logical structure.

- Expect faster iteration cycles. Training in 34 minutes instead of 36 hours means engineers can run more than 60 experiments in the time a standard VLA completes one.

- Generalization is the real prize. A model that still succeeds 78% of the time on an unseen variant is far more deployable than one that drops to 0% outside its training distribution.

- Energy costs are a business decision. As electricity prices and carbon reporting requirements grow, the energy profile of an AI system directly affects operational cost and regulatory exposure.

Common Questions — Neuro-Symbolic AI

Q: What is neuro-symbolic AI in simple terms?

A: Neuro-symbolic AI combines neural networks — which learn patterns from data — with symbolic reasoning, which applies logical rules and abstract concepts. The result is a hybrid system that can perceive the world through data and reason about it through structured logic. This makes it more efficient and far better at generalizing to situations it has never encountered before.

Q: How much energy does neuro-symbolic AI save compared to standard models?

A: In the Tufts University study, the neuro-symbolic model used only 1% of the energy needed to train the standard VLA model. During operation, it used only 5% of the energy the standard model consumed. That works out to roughly a 100-fold saving in training energy and a 20-fold saving during task execution for the robotic task tested.

Q: Is neuro-symbolic AI ready for real-world deployment in 2026?

A: The Tufts research was conducted in simulation on structured robotic tasks, so it is not yet a universal replacement for all AI workloads. However, for well-defined industrial and robotic applications — where the logical structure is predictable — the approach is mature enough for serious evaluation and pilots. The ICRA 2026 presentation will bring it to the global robotics community.

Q: How does AI energy consumption affect everyday users?

A: AI data centers compete for electricity on regional grids, which can drive up energy costs and strain infrastructure. This is especially acute in regions with high AI investment like the United States, Europe, and Southeast Asia. The IEA projects data center electricity demand to more than double by 2030. More efficient AI architectures reduce this pressure and help control the long-term cost of AI services.

Conclusion

Tufts University’s neuro-symbolic AI research delivers three clear takeaways. First, combining neural perception with symbolic reasoning cut energy use by a factor of 20 to 100 in the study's benchmark while improving accuracy rather than sacrificing it. Second, the approach enables dramatically faster training, with 34-minute runs replacing 36-hour sessions. Third, the neuro-symbolic system demonstrated superior generalization, succeeding on an unseen variant where the standard model failed entirely. As data center power demand heads toward more than doubling by 2030, this kind of architectural innovation is becoming essential rather than optional. For more on how these developments shape tech infrastructure globally, explore our Deep Dive section.

Last Updated: April 13, 2026